Metabolic City Soundscape

The Metabolic City Soundscape (MCS) project, conceived and realised by Plam Creative Studio, Sintetico and Luca Venturini, is the result of a performative visual and acoustic translation of the city's air pollution in relation to noise pollution.

The project originated at the Festa Dell'Aria 2023 event (the third edition, curated by Airbreak Ferrara) as a participatory workshop within the two-day "Engagement Sound Design Lab: Playing the Air", curated by Basso Profilo and the Department of Architecture and Urban Studies of the Politecnico di Milano and held on 6 and 7 October 2023.

MCS is the result of a study on air and noise pollution in the context of the city of Ferrara.

The research, carried out in the months preceding the workshop, identified and documented the sources responsible for the emission of pollutants and recorded the associated environmental acoustic impact in a defined area of the city.

The collected material was transformed into an interactive instrument consisting of four controllers. The controllers are linked to a musical composition created specifically from the air pollution data collected for that particular day and area.

The designed interaction involves the simultaneous use of the controllers in order to "play the air", interpreting the collected scientific data as a performance.

The data used in the analysis was obtained by reading the graph from the Corso Isonzo monitor, one of 14 air quality stations installed in the city as part of the Air Break project.

Each station monitors and measures the air quality in the area, calculates a quality index and displays its evolution during the day in the form of a graph.

The Air Break index is a numerical value from 0 to 100 and takes into account the hourly concentration levels of five different pollutants: PM2.5, PM10, NO2, O3 and CO.

https://airbreakferrara.net/che-aria-tira/

For the MCS project, four pollutants were analysed, with PM2.5 and PM10 combined into a single pollutant.

The realisation of the MCS project can be divided into three main operational phases: a first phase of collection, aimed at creating an audiovisual archive; a second phase of translation of the material for the construction of the soundscape; and a third phase of mapping and processing for the realisation of the visuals.

In the first phase, collection, audio-video recordings were made at five times of the day: night, dawn, morning, afternoon and evening. The material was subsequently catalogued according to pollutant source and time of recording.
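As an illustration of cataloguing by pollutant source and time of recording, the following is a minimal sketch, not the project's actual archive format. The `Recording` class, the `build_catalogue` function, the file names and the source labels are all hypothetical; only the five times of day come from the text.

```python
from collections import defaultdict
from dataclasses import dataclass

# The five recording times named in the text.
TIMES_OF_DAY = ("night", "dawn", "morning", "afternoon", "evening")


@dataclass(frozen=True)
class Recording:
    filename: str      # hypothetical file name
    source: str        # hypothetical pollutant source label
    time_of_day: str   # one of TIMES_OF_DAY


def build_catalogue(recordings):
    """Group file names by (pollutant source, time of day)."""
    catalogue = defaultdict(list)
    for rec in recordings:
        if rec.time_of_day not in TIMES_OF_DAY:
            raise ValueError(f"unknown time of day: {rec.time_of_day}")
        catalogue[(rec.source, rec.time_of_day)].append(rec.filename)
    return dict(catalogue)


# Hypothetical entries, for illustration only.
recordings = [
    Recording("clip_001.mp4", "road traffic", "morning"),
    Recording("clip_002.mp4", "road traffic", "night"),
    Recording("clip_003.mp4", "road traffic", "morning"),
]
catalogue = build_catalogue(recordings)
```

Keying the catalogue on both fields makes it straightforward to retrieve, for example, every morning clip for a given source.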

During the workshop held on 7 October 2023, the participants took part in the collection phase for one of the five times of the day: the morning recordings, made at 11.30 a.m. on the same day.

In the second phase, translation, the trend of the Air Break graph was translated into sound.

Taking the graph of the day analysed, the numerical values of the Air Break index were translated into MIDI (Musical Instrument Digital Interface) notes. These notes became the basis for the soundscape of the project, tracing the course of the graph and producing a melodic and rhythmic texture.

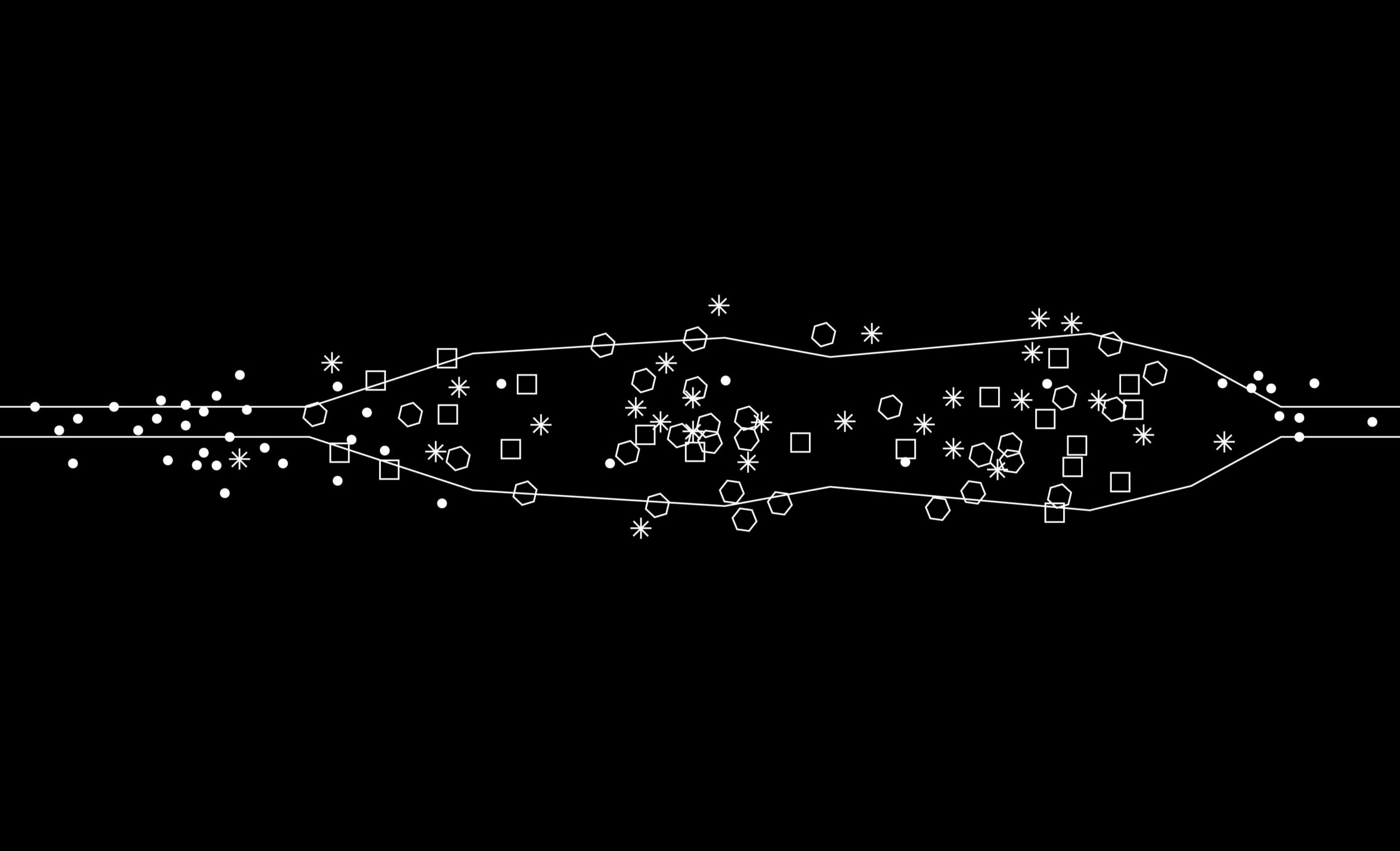
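The translation of index values into MIDI notes could be sketched as follows. The text does not state the mapping that was actually used, so the linear scaling, the note range (MIDI notes 36 to 84) and the choice that a higher index gives a higher pitch are all assumptions; `index_to_midi` and the sample readings are hypothetical.

```python
def index_to_midi(value, low_note=36, high_note=84):
    """Linearly map an index value in [0, 100] to a MIDI note number.

    The note range is an assumption made for this sketch.
    """
    if not 0 <= value <= 100:
        raise ValueError("index value must be between 0 and 100")
    return low_note + round(value / 100 * (high_note - low_note))


# Hypothetical index readings, not the Corso Isonzo data.
hourly_index = [12, 18, 25, 40, 63, 71, 55, 30]

# One note per reading, so the melody traces the shape of the graph.
notes = [index_to_midi(v) for v in hourly_index]
```

A narrower note range would flatten the melodic contour, while quantising the result to a musical scale would be a natural next step if a tonal result were wanted.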

At this stage, a graphic composition was designed to reflect the trend of the graph. It identifies the concentration of pollutants released over the course of the day and invites performers to reproduce it.

The composition follows the 7-minute soundscape track and is divided into five macro moments (night, dawn, morning, afternoon, evening).

The pollutants were represented by simple graphic signs in a legend and distributed according to emission concentration.

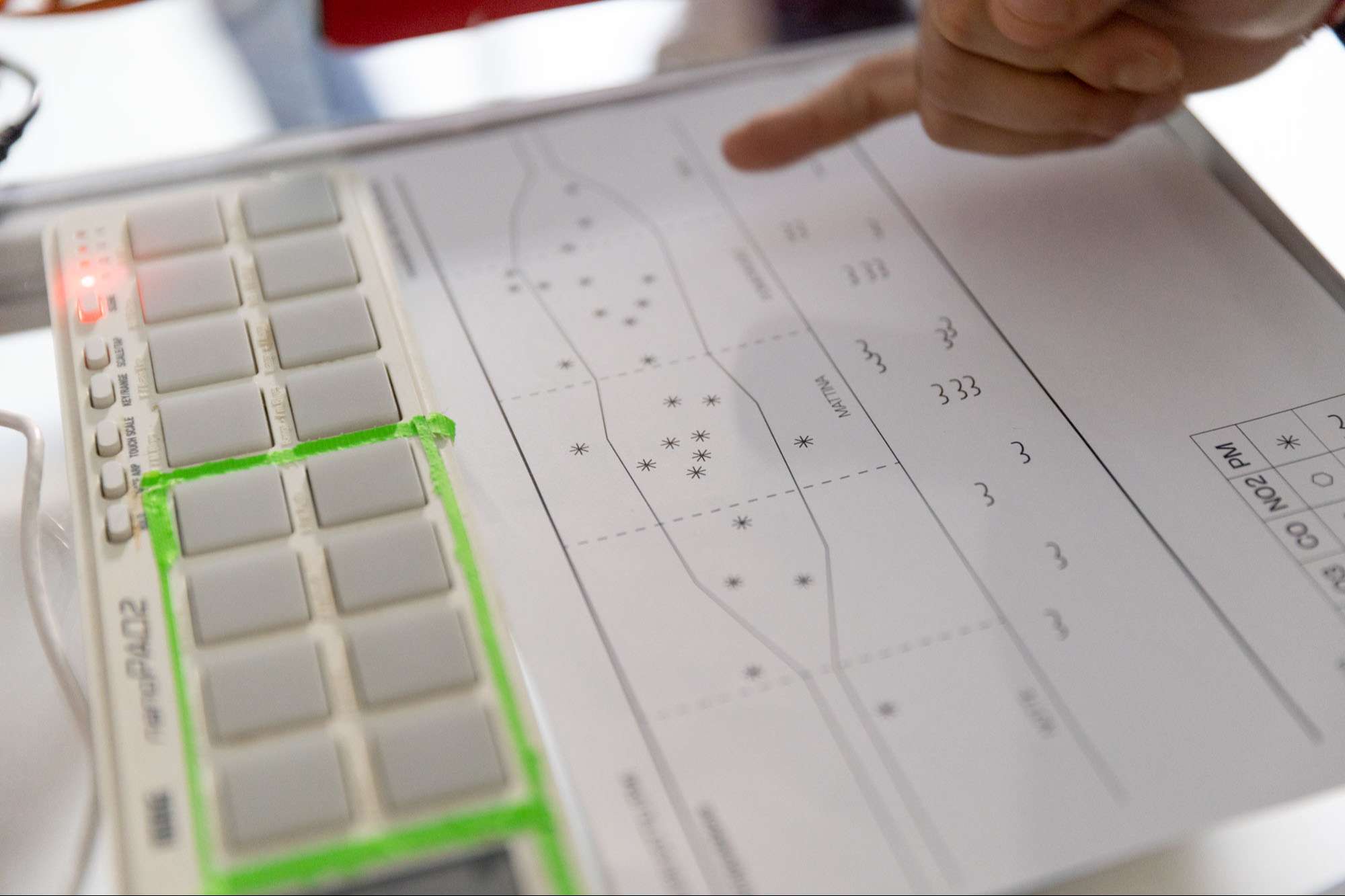

The composition was given to the workshop participants as a guide for interacting with the instrument.

In the third phase, sound and video mapping, the collected clips were linked to the sound samples via the MIDI protocol and mapped to the four controllers. Each controller represents a single pollutant: particulate matter (PM2.5 and PM10), nitrogen dioxide (NO2), ozone (O3) or carbon monoxide (CO).

The controllers consist of buttons and encoders, each of which has been given a different function.

From an auditory point of view, the buttons on the controllers are associated with the recorded sounds of the sources responsible for the emission of that pollutant. Visually, each button plays a few random frames of the video clip associated with the sound.

The encoders, on the other hand, add audio-visual effects to interpret the pollutant.
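The button behaviour can be modelled with a small lookup table. This is a sketch of the mapping logic only, not of the MIDI or video playback itself. The controller numbering, file names, clip lengths and the `on_button_press` function are hypothetical, and reading "a few random frames" as a short run of consecutive frames starting at a random position is an assumption.

```python
import random

# One controller per pollutant, as described in the text.
# The numbering 1-4 is an assumption.
CONTROLLERS = {
    1: "PM2.5 and PM10",
    2: "NO2",
    3: "O3",
    4: "CO",
}

# Hypothetical assignments:
# (controller, button) -> (sound sample, video clip, clip length in frames)
BUTTON_MAP = {
    (1, 0): ("pm_source.wav", "pm_source.mp4", 250),
    (2, 0): ("no2_source.wav", "no2_source.mp4", 300),
}


def on_button_press(controller, button, n_frames=8, rng=random):
    """Return the sample to play and a short random run of frames to show."""
    sample, clip, total_frames = BUTTON_MAP[(controller, button)]
    # Pick a start position so the whole run stays inside the clip.
    start = rng.randrange(0, total_frames - n_frames + 1)
    return {
        "pollutant": CONTROLLERS[controller],
        "sample": sample,
        "clip": clip,
        "frames": list(range(start, start + n_frames)),
    }


# Seeded generator so the example is repeatable.
event = on_button_press(2, 0, rng=random.Random(0))
```

An encoder could be handled in the same way, with its position scaled to an effect parameter rather than triggering a sample.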

The performance is the result of the simultaneous interaction of the four controllers.